The Wellcome Trust is considering funding a tool that would report on the FAIR status of research outputs. We recently responded to their Request for Information with some ideas to refine their initial plan and thought we’d share them here!

a) Include Openness Assessment

[Figure source]

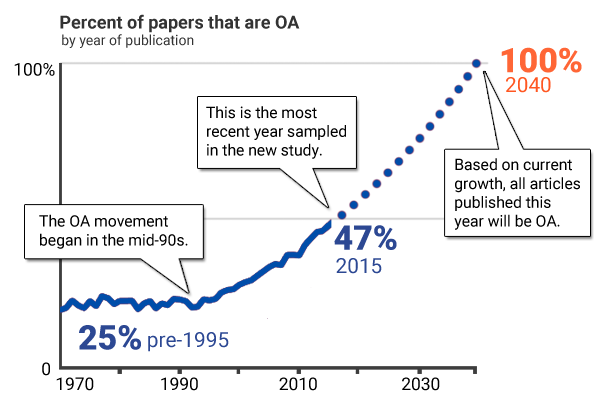

This refinement is essential for several reasons. First, we believe researchers will expect something called a “FAIR assessment” to include an assessment of Openness, and will be confused when it does not, leading to a poor understanding of the system. Second, the benefits of openness are clear to everyone, which makes the project more motivating to researchers. Third, Wellcome has already done a great job of highlighting the need for openness, so including it makes the tool an incremental addition to that work rather than a new, separate set of requirements with an unclear relationship to it. Fourth, the community needs an openness assessment tool; it would fit very well within the proposed tool, and its anticipated popularity and exposure would help the FAIR assessment gain traction.

b) Require the tool to produce Open Data, not just be Open Source

The project brief was very clear that the tool needs to be Open Source, with a liberal license. This is great. We suggest the brief also require that the data provided by the tool be Open Data. Ideally the brief would suggest a license for the data (CC0, or an open database license that permits reuse, including commercial reuse) and data delivery specifications. For data delivery we suggest both regular full data dumps and a free, open, machine-readable JSON API that requires minimal registration, is high-performing (< 1 second response time), can handle high concurrent load, has high daily quota limits, and can serve at least a million calls per day across the system.
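To put these API targets in perspective, here is a back-of-envelope sketch of the load they imply. It is illustrative only: the 10x peak-to-average traffic factor is our assumption, not part of the brief, and the concurrency estimate uses Little's law (requests in flight = arrival rate × time per request).

```python
# Back-of-envelope load estimate for the suggested API targets.
# Assumption (not from the brief): peak traffic is 10x the daily average.
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def average_rps(calls_per_day: int) -> float:
    """Average requests per second implied by a daily call volume."""
    return calls_per_day / SECONDS_PER_DAY


def peak_rps(calls_per_day: int, peak_factor: float) -> float:
    """Requests per second during a burst, given an assumed peak factor."""
    return average_rps(calls_per_day) * peak_factor


def concurrent_requests(rps: float, latency_s: float) -> float:
    """Little's law: requests in flight = arrival rate x time in system."""
    return rps * latency_s


calls = 1_000_000
avg = average_rps(calls)
peak = peak_rps(calls, peak_factor=10)
inflight = concurrent_requests(peak, latency_s=1.0)

print(f"average: {avg:.1f} req/s")  # average: 11.6 req/s
print(f"peak (10x): {peak:.1f} req/s")  # peak (10x): 115.7 req/s
print(f"in flight at peak with 1 s responses: {inflight:.0f}")  # 116
```

Under these assumptions a million calls a day averages only about 12 requests per second; the harder requirement is keeping responses under a second during bursts, which is why the concurrency target matters as much as the daily total.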

It could also specify that money could be charged for Service-Level Agreements on the API for institutions that want them, for above-normal API quotas, for more frequent data dumps, or similar. This mirrors the open data model we use for Unpaywall, which has worked very well.

c) Pre-ingest hundreds of millions of research objects

The project brief should state more explicitly that the software tool needs to launch with pre-calculated scores/badges for hundreds of millions of research objects. Fortunately, many research objects are already listed in repositories like Crossref, DataCite, GitHub, etc. These should be ingested and form the basis of the dataset used by the tool. This pre-ingestion is implicitly needed for some of the leaderboards and aggregations specified in the brief; in our opinion it should be made explicit. It would also allow large-scale calibration of scores, large-scale dataset exports to support policy research, additional tools, etc., and would ensure a high-performing system, which cannot be guaranteed when FAIR assessments for most products are made ad hoc upon request.

(Admittedly, gathering research objects registered in such sources naturally selects for objects that have identifiers, a certain standard and kind of metadata, and a certain FAIR level, so the resulting dataset isn’t representative of all research objects. This needs to be considered when using it for calibration.)

d) More details on aggregation

The brief doesn’t include enough detail on aggregation, which in our opinion is key.

Aggregation provides context for FAIR metrics and badges (through percentiles, etc.), facilitates publicity, and inspires change and improvement. Most research objects do not currently have metadata that supports interesting aggregation: datasets are rarely associated with an ORCID or an institution, for example. The RFP should ask proposals to specify how they will facilitate aggregation. We anticipate that proposals will include a combination of automated approaches using metadata (using Crossref and DataCite metadata, and PubMed LinkOut data, to associate datasets with papers, which are themselves associated with ORCIDs, clinical trial IDs, and GRID institutional identifiers), text mining (for example, to associate GitHub links with papers), and methods for CSV uploads that link identifiers to aggregation groups.
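As a rough illustration of the CSV-upload route, the sketch below reads a file that maps identifiers to aggregation groups and reports each group's mean score together with the percentile rank of that mean in the corpus-wide distribution of object scores. The CSV columns, the identifiers, the 0-100 scores, and the mean-then-percentile summary are all hypothetical choices for illustration; a real tool would draw scores from its pre-ingested dataset and might prefer a different summary.

```python
import csv
import io
from bisect import bisect_left, bisect_right
from collections import defaultdict
from statistics import mean

# Hypothetical CSV upload linking research-object identifiers to groups.
UPLOAD = """identifier,group
10.1234/paper.1,Institution A
10.1234/data.7,Institution A
10.1234/paper.2,Institution B
"""

# Hypothetical pre-calculated FAIR scores (0-100) for the ingested corpus.
SCORES = {
    "10.1234/paper.1": 80,
    "10.1234/data.7": 40,
    "10.1234/paper.2": 20,
    "10.1234/other.9": 60,
}


def load_groups(csv_text):
    """Map each group name to the list of identifiers uploaded for it."""
    groups = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        groups[row["group"]].append(row["identifier"].strip())
    return groups


def percentile_rank(score, sorted_scores):
    """Percent of corpus scores below `score`, counting ties as half."""
    below = bisect_left(sorted_scores, score)
    equal = bisect_right(sorted_scores, score) - below
    return 100.0 * (below + 0.5 * equal) / len(sorted_scores)


def summarize(groups, scores):
    """Return {group: (mean score, percentile of that mean in the corpus)}."""
    corpus = sorted(scores.values())
    summary = {}
    for group, ids in groups.items():
        known = [scores[i] for i in ids if i in scores]  # skip unscored IDs
        if not known:
            continue
        avg = mean(known)
        summary[group] = (avg, percentile_rank(avg, corpus))
    return summary


for group, (avg, pct) in sorted(summarize(load_groups(UPLOAD), SCORES).items()):
    print(f"{group}: mean score {avg:.0f}, percentile {pct:.1f}")
# Institution A: mean score 60, percentile 62.5
# Institution B: mean score 20, percentile 12.5
```

The percentile is what turns a bare number into context: "60" says little on its own, while "above roughly 60% of scored objects" is something a department can compare and act on.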

e) Include Actionable Steps for immediate FAIR score improvement

The brief should specify that after showing researchers their scores, the tool links them to actionable steps they can take to improve their FAIR and Open Data scores. These could simply be how-to guides: how to put your software on GitHub, how to specify a license for your dataset, how to make your paper Open Access by uploading the accepted manuscript, etc. The guides should walk the researcher through improving the scores of existing products, and the tool should then immediately recalculate the FAIR score so the researcher can see their progress. If this kind of recalculation is not built into the design from the beginning, it can lead to system designs that make it difficult to add later.
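A minimal sketch of the score-then-recalculate loop, assuming a hypothetical score made of three pass/fail checks, each paired with the how-to guide that fixes it. The check names and the equal weighting are our own illustrative assumptions. Because the score is derived from the object's current metadata rather than stored once, recalculating after the researcher acts is just another call.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ResearchObject:
    # Hypothetical metadata flags for a single research object.
    identifier: str
    has_license: bool
    is_open_access: bool
    in_public_repository: bool


# Hypothetical checks, each paired with the how-to guide that fixes it.
CHECKS = [
    ("has_license", "How to specify a license for your dataset"),
    ("is_open_access", "How to make your paper Open Access by uploading the accepted manuscript"),
    ("in_public_repository", "How to put your software on GitHub"),
]


def score(obj: ResearchObject) -> int:
    """Percentage of checks passed, rounded to the nearest integer."""
    passed = sum(getattr(obj, name) for name, _ in CHECKS)
    return round(100 * passed / len(CHECKS))


def next_steps(obj: ResearchObject) -> list:
    """How-to guides for every check the object currently fails."""
    return [guide for name, guide in CHECKS if not getattr(obj, name)]


before = ResearchObject("10.1234/data.7", has_license=False,
                        is_open_access=True, in_public_repository=False)
print(score(before), next_steps(before))  # score is 33

# The researcher follows the license guide; recalculate immediately.
after = replace(before, has_license=True)
print(score(after), next_steps(after))  # score is 67
```

Keeping scoring a pure function of current metadata is the design choice that makes instant recalculation cheap; a system that only computes scores in periodic batch jobs would have to bolt this on later.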

f) Open grants process for this RFI

The RFP should give applicants the option to make their proposals public (and encourage them to do so), and the grant reviews should be public. Failing that, it should at least take steps in this direction, in the spirit of incremental improvement on Wellcome’s great Open Research Fund mechanisms.